A modern data warehouse architecture is designed to efficiently manage, process, and analyze vast amounts of structured, semi-structured, and unstructured data. The architecture has evolved to support cloud-native platforms, real-time analytics, and AI-driven insights.

🏷️A Hybrid Modern Data Warehouse is a flexible architecture that combines both on-premise and cloud components, allowing organizations to leverage the best of both environments for data storage, processing, and analysis. It provides the ability to integrate multiple data sources, process data at scale, and generate real-time or batch insights, while maintaining cost-effectiveness, data security, and compliance.

🏷️A Hybrid Modern Data Warehouse architecture provides the scalability, flexibility, and performance required for modern data analytics, supporting real-time insights, machine learning, and big data processing across various industries.

Below is a typical modern data warehouse architecture consisting of several layers, technologies, and components:

🕹️Data Sources (Ingestion Layer): The ingestion layer focuses on bringing in data from various sources, including:

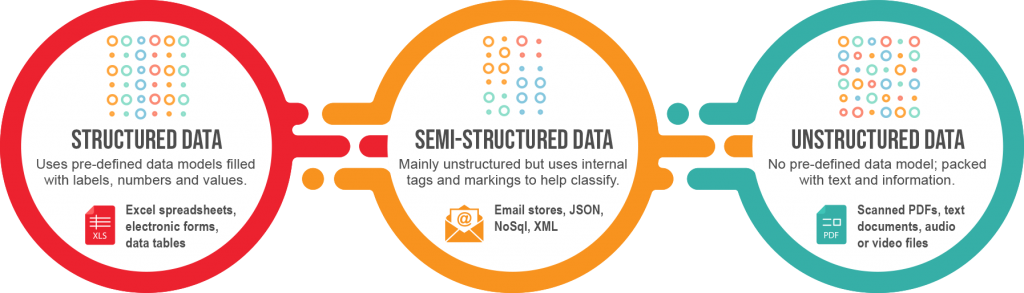

- Structured Data: Data from transactional databases (e.g., SQL Server, MySQL, Oracle) and it uses pre-defined data models filled with labels, numbers and values and is stored in relational databases (RDBMS), CRM, ERP systems, and Excel/CSV files.

- Semi-Structured Data: Semi-structured data is data in between structured and unstructured as it is mainly unstructured but it also contains internal tags and markings (in the form of metadata) that helps to identify, group and hierarchically organize the data. This native metadata ultimately makes it much easier to process and analyze semi-structured data such as — JSON, XML, Avro, and Parquet data formats from web APIs, logs, or IoT devices.

- Unstructured Data: It comes from scanned PDF files and word processing documents. It is used in natural language processing as it is very challenging for traditional software to easily extract, ingest, process and analyze the data. Unstructured data comes in text documents, rich media (like audio or video files) or even social media posts, and is stored in it’s original format until the data is found / recognized and extracted such as Text files, audio, video, images, emails, social media, and documents.

- Streaming Data: Real-time data feeds from IoT sensors, web activity, or financial transactions (e.g., using Apache Kafka or AWS Kinesis).

Data from these sources is typically brought into the warehouse through ETL (Extract, Transform, Load) or ELT (Extract, Load, Transform) processes, enabling batch or real-time ingestion.

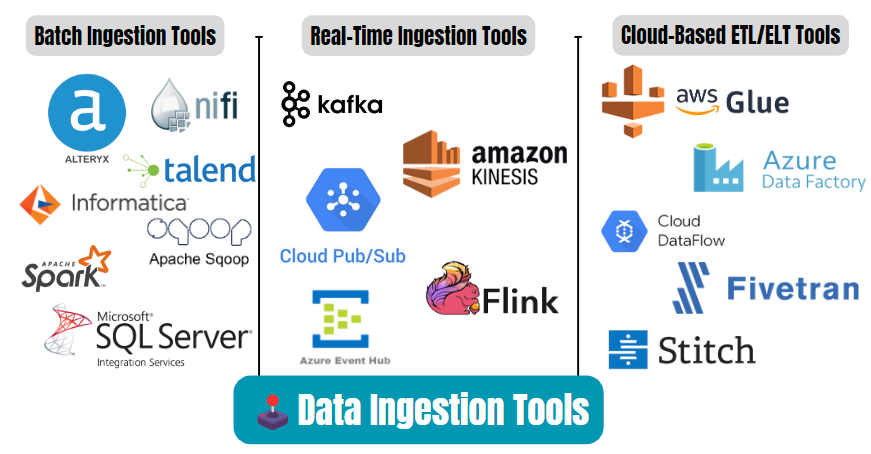

🕹️Data Ingestion Tools : This layer handles the movement and integration of data from different sources. Common tools include:

- Batch Ingestion Tools: Batching is when data is ingested in discrete chunks at periodic intervals rather than collected immediately as it is generated. The ingestion process waits until the assigned amount of time has elapsed before transmitting the data from original source to storage such as Apache NiFi, Talend, Informatica, Apache Sqoop.

- Real-Time Ingestion Tools: Ingestion occurs in real-time, where each data point is imported immediately as the source creates it. The data is made available for processing as soon as it is needed to facilitate real-time analytics and decision-making. Real-time ingestion is also called streaming or stream processing, here is the example of tools such as Apache Kafka, AWS Kinesis, Google Cloud Pub/Sub, Apache Flink.

- Cloud-Based ETL/ELT Tools: Cloud-based ETL/ELT tools are services that help extract, transform, and load (ETL) or extract, load, and transform (ELT) data across various sources, making it easier to integrate and prepare data for analytics. These tools are designed for use in cloud environments, offering scalability, flexibility, and ease of use. Here is the example of tools such as AWS Glue, Azure Data Factory, Google Dataflow, Fivetran, Stitch.

- ETL tools like AWS Glue, Talend, and Azure Data Factory are best for more complex data transformations that need to occur before loading into a target system.

- ELT tools like Fivetran, Stitch, and Hevo Data allow raw data to be loaded into cloud data warehouses first, then transformed within the destination system.

- Cloud-based tools are scalable, flexible, and highly automated, making them perfect for managing the increasing volume and complexity of modern data pipelines.

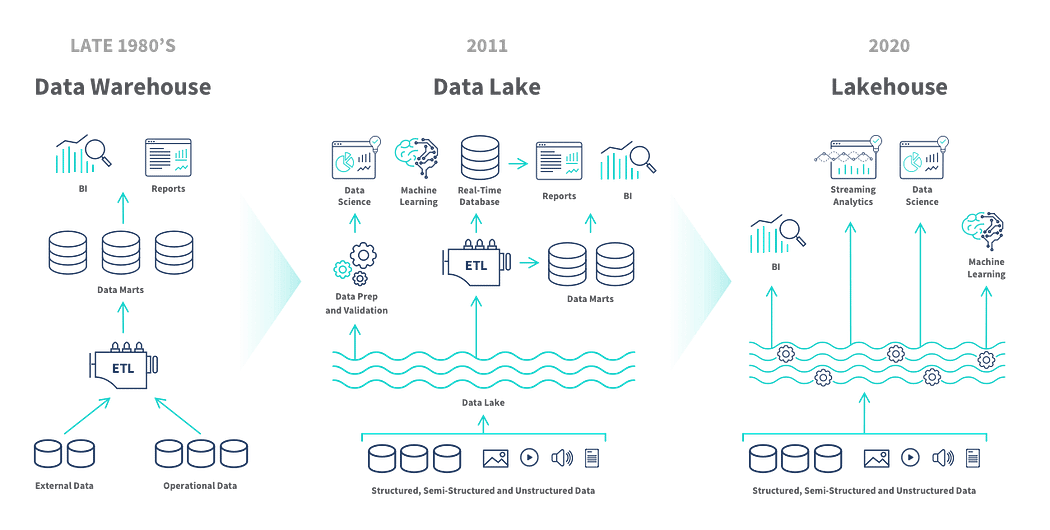

🕹️ Storage Layer (Data Lake and Data Warehouse): In modern architectures, the storage layer consists of both a data lake and a data warehouse, forming a data lakehouse architecture.

- Data Lake: Stores raw, unstructured, and semi-structured data (e.g., Hadoop HDFS, AWS S3, Azure Data Lake Storage, Google Cloud Storage).

- Data Warehouse: Stores cleaned and structured data for analytical queries. This is where traditional analytics happens (e.g., Amazon Redshift, Snowflake, Google BigQuery, Azure Synapse).

- Data Lakehouse: A combination of data lake and data warehouse capabilities, enabling processing and analytics of both structured and unstructured data. Examples include Databricks Lakehouse Platform and Delta Lake.

🕹️Data Processing Layer : This layer is responsible for transforming, cleaning, and preparing data for analytics, machine learning, and reporting. Key technologies include:

- Batch Processing: Apache Spark, Apache Hive, Databricks.

- Real-Time/Stream Processing: Apache Kafka Streams, Apache Flink, Apache Storm, AWS Kinesis Analytics.

- ETL/ELT Pipelines: Data transformation tools (AWS Glue, dbt, Apache Airflow for orchestration).

🕹️Metadata Management and Data Governance : Modern data warehouses incorporate metadata management, data cataloging, and data governance to ensure data integrity, quality, and compliance with regulations (like GDPR, CCPA).

- Data Catalogs: Tools like AWS Glue Data Catalog, Azure Purview, and Google Data Catalog track metadata across datasets, making it easier to find and use data.

- Data Governance: Ensures data quality, security, and access control (e.g., Apache Atlas, Collibra, Alation).

🕹️Analytical and Query Layer : This layer provides the capabilities to query, analyze, and explore the data stored in the warehouse. It includes:

- SQL Query Engines: SQL-based querying on both data warehouses and data lakes (Presto, Trino, Google BigQuery, Snowflake).

- OLAP Engines: For fast analytical queries, Online Analytical Processing engines (like Apache Druid, ClickHouse) are used.

- ML and AI: Platforms like Databricks, H2O.ai, or AWS Sagemaker enable machine learning models on the warehouse data.

🕹️Business Intelligence (BI) and Reporting Layer : This layer allows business users and analysts to visualize and derive insights from the data:

- BI Tools: Power BI, Tableau, Looker, Qlik, Google Data Studio.

- Dashboards: Create interactive dashboards to monitor KPIs and business metrics in real time.

- Ad-hoc Analysis: Enables users to run custom queries and reports on warehouse data.

🕹️ Security and Compliance : Data security is a critical component of modern data warehouses, involving:

- Encryption: Data encryption both at rest and in transit.

- Access Control: Role-Based Access Control (RBAC) and Identity Access Management (IAM) for managing who can access and modify data (e.g., AWS IAM, Azure AD, Google IAM).

- Auditing: Track access and changes to ensure compliance with regulations.

🕹️ Cloud Integration and Scalability: The modern data warehouse is typically cloud-based or hybrid, with cloud platforms offering:

- Scalability: Ability to scale up or down resources (compute, storage) based on demand.

- Serverless Architectures: Elastic compute without the need for infrastructure management (e.g., AWS Redshift Spectrum, Google BigQuery, Snowflake).

- Integration with Cloud Services: Built-in connectors to services like AWS Lambda, Azure Functions, Google Cloud Functions for triggering events.

🕹️ Monitoring and Performance Optimization : This layer ensures the performance, reliability, and health of the data warehouse environment:

- Monitoring Tools: CloudWatch (AWS), Azure Monitor, and Google Cloud Monitoring are used for tracking resource usage, query performance, and failure alerts.

- Optimization: Query optimization, partitioning, caching, and indexing techniques to improve query speed and reduce costs.

✌️Key Benefits of a Hybrid Modern Data Warehouse

- Flexibility: Allows organizations to move workloads between on-premise and cloud as needed.

- Cost Efficiency: Optimizes costs by leveraging the scalability of cloud resources while maintaining control over sensitive on-premise data.

- Scalability: Cloud components can scale automatically with demand, while on-premise systems provide stability for legacy applications.

- Data Sovereignty: Ensures data stays on-premise for sensitive use cases while leveraging cloud for scalability and flexibility.

✌️Sample Workflow for a Hybrid Data Warehouse:

- Ingest: Raw data from multiple sources is ingested into cloud and on-premise storage systems using ETL tools like Talend or AWS Glue.

- Store: Data is stored in a combination of on-premise databases and cloud data lakes or warehouses.

- Process: Both batch and real-time data processing pipelines process data using Apache Spark, AWS Glue, or Azure Data Factory.

- Analyze: BI tools like Power BI or Tableau fetch processed data from both environments for insights.

- Monitor: Pipeline performance and system health are monitored using tools like AWS CloudWatch or Azure Monitor.

🛫 Summary of Modern Data Warehouse Architecture 🛫

🚀Data Sources: Structured, semi-structured, unstructured, and streaming data.

🚀Ingestion Tools: ETL/ELT pipelines (batch and real-time).

🚀Storage Layer: Data lake, data warehouse, or data lakehouse architecture.

🚀Data Processing Layer: Data transformation and analytics (batch and real-time processing).

🚀Metadata Management & Governance: Data cataloging and data quality enforcement.

🚀ML Analytics & Querying: Query engines, OLAP, machine learning.

🚀BI & Reporting: Dashboards, reports, visualization, and ad-hoc analysis.

🚀Security and Compliance: Encryption, access control, and compliance.

🚀Cloud Infrastructure: Elasticity, integration, and serverless computing.

🚀Monitoring & Optimization: Performance tuning, monitoring, and resource optimization.

This architecture provides the scalability, flexibility, and performance required for modern data analytics, supporting real-time insights, machine learning, and big data processing across various industries.

By leveraging both on-premise and cloud infrastructures, organizations can create a modern data warehouse that maximizes performance, agility, and scalability, while ensuring data security and compliance in hybrid environments.

Your support is greatly appreciated! If you found this article valuable, don’t forget to clap👏, follow✌️, and subscribe❤️💬🔔 to stay connected and receive more insightful content. Let’s grow and learn together!